Missions


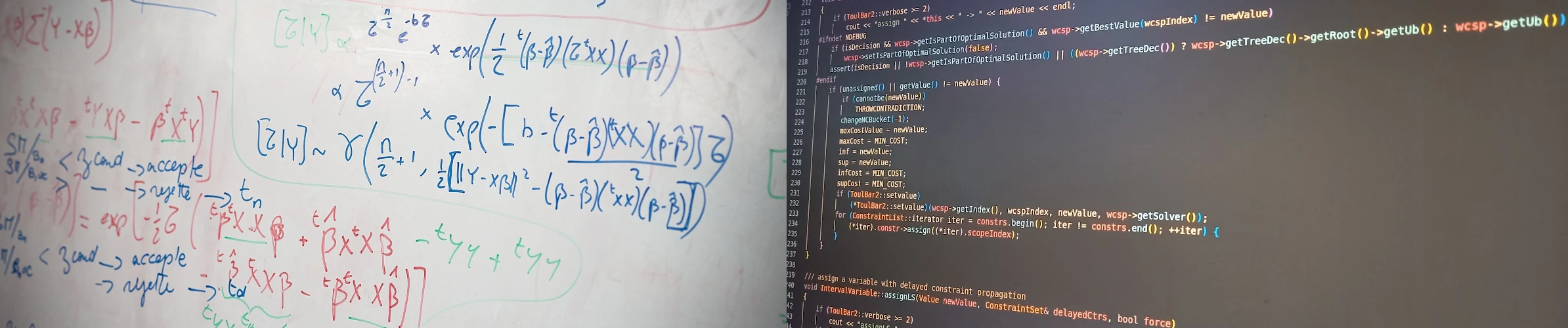

Meeting the challenges of the life sciences requires strengthening the links between computation, modeling, and these sciences (biology, agronomy, ecology, environmental sciences). The goal is to foster and accelerate exploratory in silico research on objects ranging from the gene to the ecosystem.

Computer science, statistics and, more generally, mathematics are essential for three tasks. They organize data about these objects and make it accessible. They represent modes of organization and simulate interactions within the complex systems that these objects form or belong to. They also provide the basis for designing formal and computational tools for data analysis, prediction, and optimization.

These disciplines play a fundamental role in the search for meaning, in understanding how systems function, and in steering or managing systems of human (anthropogenic) origin. For researchers in mathematics and computer science, these needs give rise to original applications of their disciplines' tools as well as to new methodological developments.

The unit's mission is to help address these needs. Its research is accompanied by software development, which puts the results to practical use, and by training activities, which disseminate them. The unit pursues a dual ambition: producing disciplinary research in its areas of expertise, and producing applied research by building projects and collaborating with biologists and agronomists.

Organization


The Toulouse Applied Mathematics and Computer Science Unit (MIAT) is an in-house research unit (UR875) of INRAE's MATHNUM department.

Join us! See our job openings.

Research teams

- SCIDYN (Simulation, Control and Inference of Agro-environmental and Biological Dynamics), formerly the MAD team (Modeling of Agro-ecosystems and Decision)

- SaAB (Statistics and Algorithms for Biology)

Service teams (platforms)

- Genotoul-Bioinfo (bioinformatics platform of the GIS GENOTOUL, Génopole Toulouse Midi-Pyrénées)

- RECORD (modeling and simulation platform for agro-ecosystems)

- SIGENAE ("Information Systems for Livestock Genomes" platform)